Digital platforms are funding disinformation and their own opacity prevents the phenomenon from being fully studied · Maldita.es

Creators of disinformation have different motivations: some seek ideological gain, others do it simply to sow confusion, but there are also many for whom disinformation is a way to make money. They know that disinformative content, almost always emotional and eye-catching, is a reliable way to attract public attention. They also know that the recommendation algorithms of major digital platforms amplify precisely this type of content, giving it the visibility needed to generate revenue.

Within this group are those who exploit the monetization programs of large platforms, in which companies such as YouTube, TikTok, Facebook, Instagram, or X pay content creators by granting them, for example, a share of the advertising revenue that the platform earns by placing ads in their videos. Most platforms have rules that prohibit the monetization of disinformative content, but at Fundación Maldita.es we have shown that at least TikTok and YouTube do not comply with them. In practice, they are funding disinformation.

How effective would certain disinformative content be without these monetization models that reward it? What relationship exists between this economic incentive and the promotion that this type of content receives from platform recommendation algorithms? Is there a connection between the economic gains of disinformation creators and how they contribute to the economic gains of the platforms themselves? Many of these questions are difficult to answer in detail because only the platforms have the data on which users they are funding, and they refuse to share it.

Generating income while spreading disinformation about issues such as the climate

An analysis of YouTube by Fundación Maldita.es identified 20 channels, with a combined total of more than 21 million subscribers, that spread debunked climate disinformation. Despite this, all of them include advertising in their videos, contrary to YouTube’s own rules, which indicates that the platform is providing them with a share of the revenue generated by those ads.

On TikTok, several of the accounts investigated by Fundación Maldita.es for generating AI-based videos of demonstrations in order to increase followers and access monetization programs also shared videos about climate phenomena. For example, one profile with more than 38,000 followers posted more than 40 synthetic videos after the snowfall in Russia in January 2026. Other accounts published videos about flooding caused by rains in Gaza.

More than 60 accounts on X that shared hoaxes debunked by Maldita.es about the floods in Valencia following the DANA had the blue checkmark for being part of X Premium, one of the requirements to monetize on this platform. The same X Premium badge was also displayed by some accounts that were part of a campaign which, after the same environmental disaster, posted and replied massively to other tweets, including disinformative or violent messages.

Although X does not have any rules on this matter, many other platforms do restrict the monetization of climate disinformation. For example, Meta states that it may demonetize accounts that repeatedly publish content rated as false by independent fact-checkers. On TikTok, content that violates the community guidelines, which cover climate disinformation, cannot be monetized either, at least in theory. On YouTube, monetization of messages that contradict the scientific consensus on climate change is prohibited. Despite this, we have documented cases on several platforms where these rules are not being enforced or do not cover the full scope of the phenomenon.

These programs are a risk factor according to the DSA

Systems that reward a creator based on the engagement their content receives can become a risk factor when the most eye-catching or controversial content generates higher income. Disinformation about climate-related issues or events can have serious consequences for public safety if it leads to decisions not based on facts, in addition to preventing people from accessing reliable information and fully exercising their constitutional right to receive truthful information.

According to the EU’s Digital Services Act (DSA), large online platforms must prevent these risks when their design or operation facilitates the spread of content that causes them. In other words, if platforms’ creator revenue programs are incentivizing or rewarding the spread of climate disinformation, platforms must assess this risk and propose measures to limit its impact.

Available data on monetization is almost nonexistent

Investigating this phenomenon in depth is difficult. In the cases documented by Fundación Maldita.es, we can only infer that content is being monetized from certain characteristics of the content or profile and from publicly available information about how platform revenue programs operate. This is practically the only option, since platforms generally do not make public key information about which posts or accounts are being monetized and to what extent.

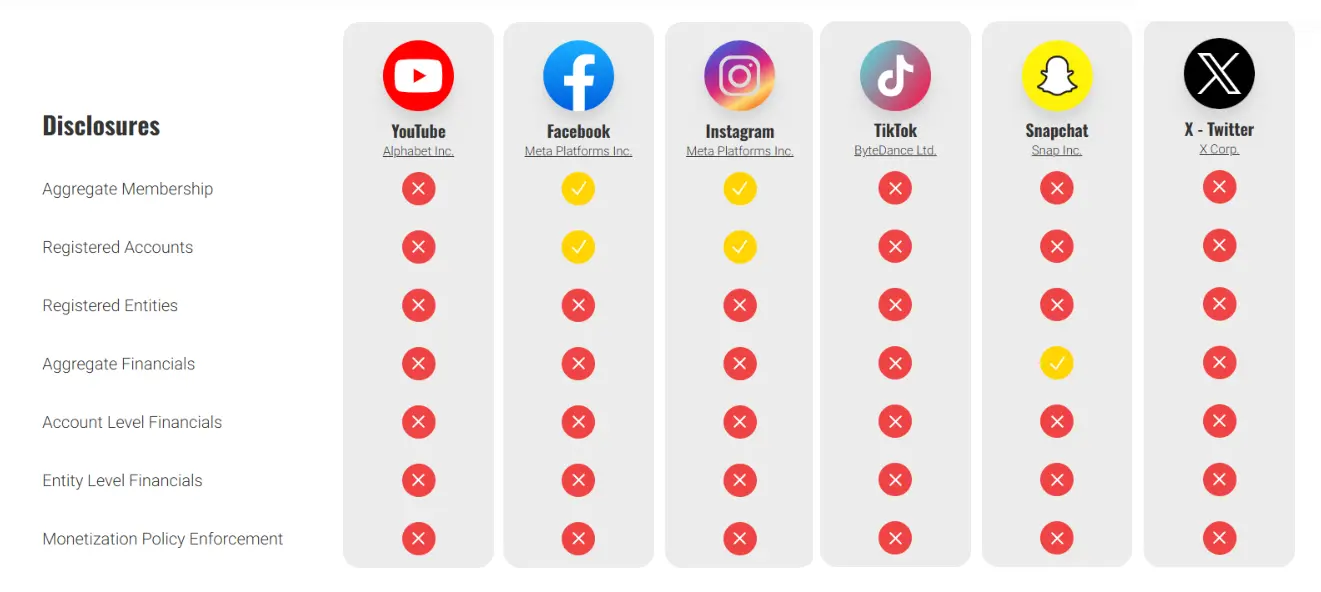

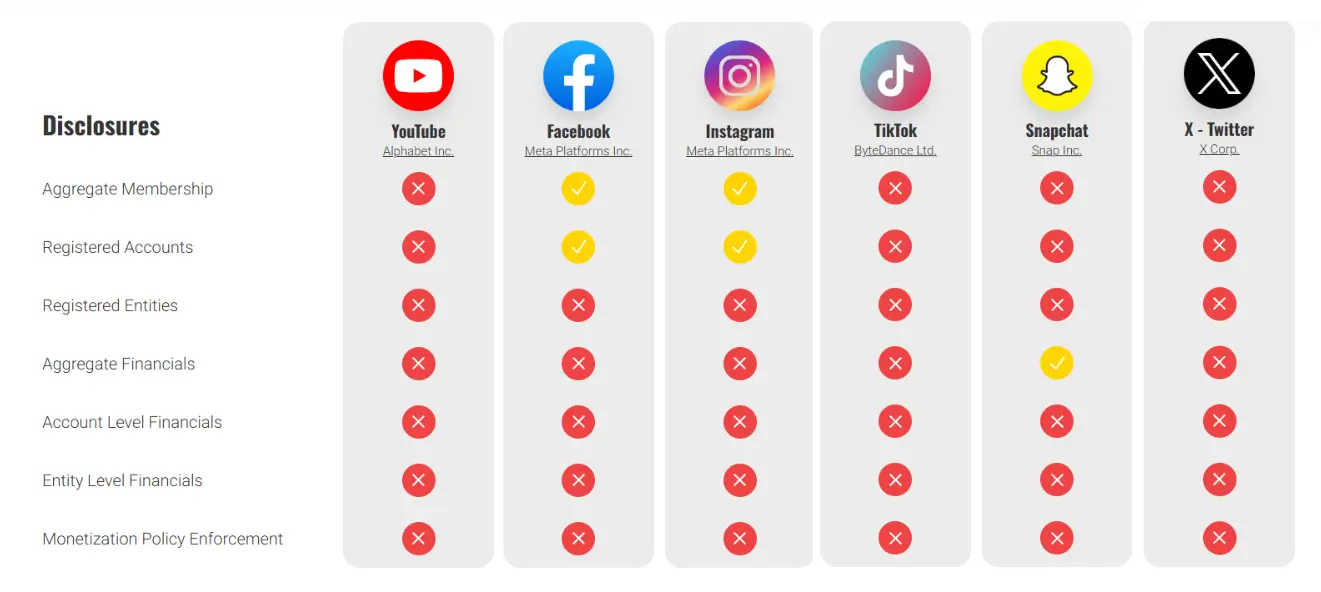

Only Meta’s platforms provide minimal information about the accounts that are part of the program and the total number of registered users. Meanwhile, Snapchat reports the total amount of revenue distributed among creators. The organization What To Fix shows the transparency comparison:

This lack of transparency makes it extremely difficult for organizations such as Fundación Maldita.es to independently assess these economic incentives and how they are actually influencing disinformation on platforms.

Recommendations from Fundación Maldita.es

Although disinformation is not a new phenomenon, major digital platforms currently play a key role in amplifying, encouraging, and funding it, which increases the scale of the problem.

Platforms must face their legal responsibility not to incentivize the spread of disinformation. That means not only setting clear rules that define which content they remunerate and which they do not, but also ensuring they have the capacity to enforce these rules effectively.

Many of these platforms have rules on the disclosure of paid partnerships so that users are aware of them when consuming content. The same principle should apply to payments coming from the platforms themselves, and this information should be accessible to users within the platforms’ interfaces. In addition, access to this data should be facilitated for accredited researchers so that they can assess potential risks.

For their part, the relevant authorities must ensure compliance with the Digital Services Act by carrying out the investigations needed to confirm certain breaches by major platforms and by ensuring that these breaches are corrected. In particular:

- The existence of adequate measures to reduce the risk of abuse of algorithmic recommendation systems and monetization programs to produce and distribute disinformation.

- The availability of the data held by platforms regarding their monetization programs, ensuring that requests for access by researchers seeking to conduct systemic risk studies are not arbitrarily denied.

- The effective enforcement of the platform’s internal rules in this area, as these rules constitute a commitment to their users.