Digital platforms are funding disinformation and their own opacity prevents the phenomenon from being fully studied · Maldita.es

Creators of disinformation have different motivations: some seek ideological gain, others do it merely to sow confusion, but there are also many for whom disinformation is a way to make money. They know that disinformative content, which is almost always emotional and eye-catching, is a reliable way to attract public attention. They also know that the recommendation algorithms of major digital platforms amplify precisely this type of content, giving it the visibility needed to generate income.

This group includes those who exploit the monetization programs of large platforms, in which companies such as YouTube, TikTok, Facebook, Instagram, or X pay content creators by granting them, for example, a share of the advertising revenue that the platform earns by placing ads in their videos. Most platforms have rules that prohibit the monetization of disinformative content, but at Fundación Maldita.es we have shown that at least TikTok and YouTube do not comply with them. In practice, they are funding disinformation.

How effective would certain disinformative content be without the monetization models that reward it? What relationship exists between this economic incentive and the promotion that this type of content receives from platform recommendation algorithms? Is there a connection between the earnings of disinformation creators and how they contribute to the earnings of the platforms themselves? Many of these questions are difficult to answer in detail because only the platforms have the data on which users they are funding, and they refuse to share it.

Generating income while spreading disinformation on issues such as the climate

An analysis of YouTube by Fundación Maldita.es identified 20 channels with more than 21 million subscribers that spread debunked climate disinformation. Despite this, all of them include advertising in their videos, contrary to YouTube's own rules, which means that the platform is giving them a share of the revenue generated by these ads.

On TikTok, several of the accounts investigated by Fundación Maldita.es for producing AI-generated videos of demonstrations in order to gain followers and access monetization programs also shared videos about climate phenomena. For example, one profile with more than 38,000 followers posted more than 40 synthetic videos after the snowfall in Russia in January 2026. Other accounts published videos about flooding caused by rain in Gaza.

More than 60 accounts on X that shared hoaxes debunked by Maldita.es about the floods in Valencia following the DANA had the blue checkmark for being part of X Premium, which is one of the requirements for monetizing on this platform. The same X Premium badge was also displayed by some accounts that took part in a campaign which, after the same environmental disaster, posted and replied en masse to other tweets with disinformative or violent messages.

Although X has no rules on this matter, many other platforms do restrict the monetization of climate disinformation. For example, Meta states that it may demonetize accounts that repeatedly publish content rated as false by independent fact-checkers. On TikTok, content that violates the community guidelines, which cover climate disinformation, theoretically cannot be monetized either. On YouTube, monetization of content that contradicts the scientific consensus on climate change is prohibited. Despite this, we have documented cases on several platforms where these rules are not being enforced or do not cover the full scope of the phenomenon.

These programs are a risk factor according to the DSA

Programs that reward creators based on the engagement their content receives can become a risk factor when the most eye-catching or controversial content generates the most income. Disinformation about climate-related issues or events can have serious consequences for public safety if it leads people to make decisions that are not based on facts, in addition to preventing them from accessing reliable information and fully exercising their constitutional right to receive truthful information.

Under the EU's Digital Services Act (DSA), very large online platforms must address these risks when their design or operation facilitates the spread of content that causes them. In other words, if these creator revenue programs are incentivizing or rewarding the spread of climate disinformation, platforms must assess this risk and propose measures to limit its impact.

Available data on monetization is almost nonexistent

Investigating this phenomenon in depth is difficult. In the cases documented by Fundación Maldita.es, we can infer that monetization exists from certain characteristics of the content or profile and from publicly available information about how platform revenue programs operate. This is practically the only option, since platforms generally do not disclose the key information: which posts or accounts are being monetized, and to what extent.

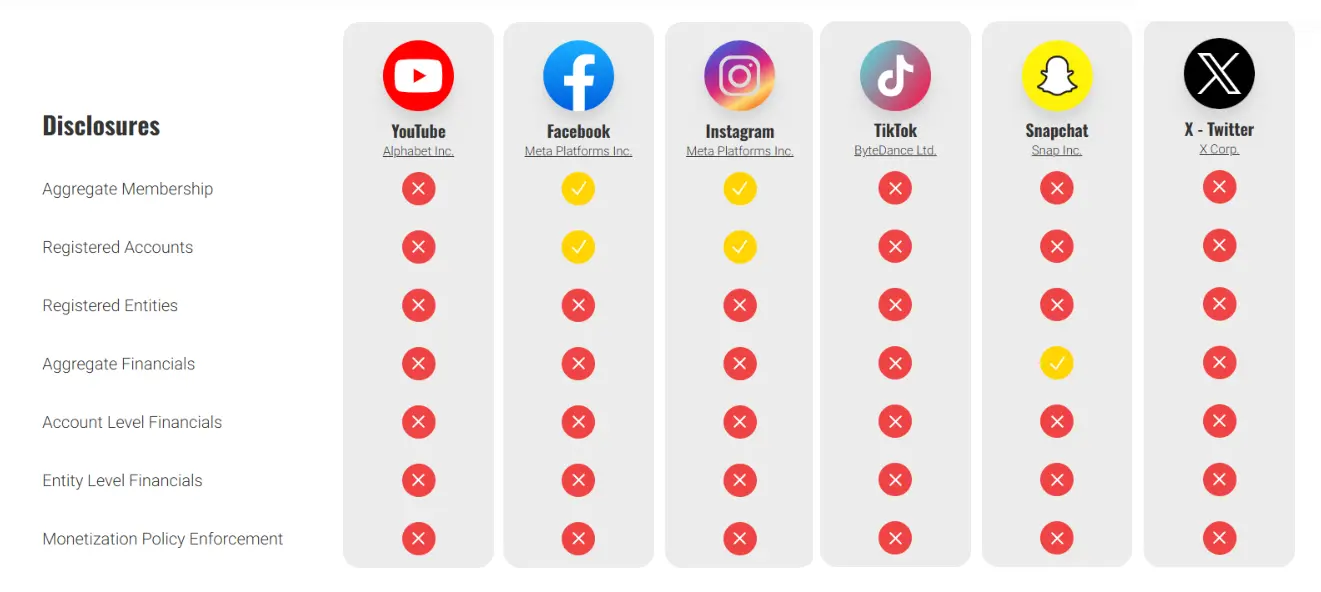

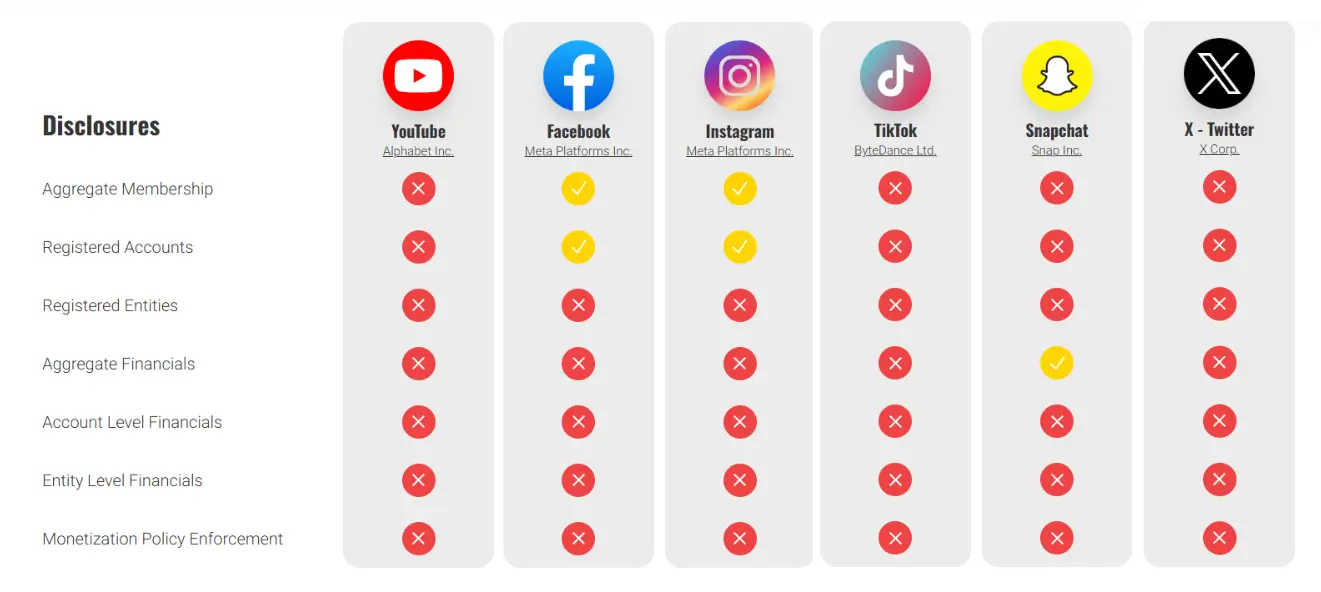

Solely Meta’s platforms present minimal details about the accounts which might be a part of this system and the entire variety of registered customers. In the meantime, Snapchat experiences the entire quantity of income distributed amongst creators. The organization What To Fix shows the transparency comparison:

This lack of transparency makes it extremely difficult for organizations such as Fundación Maldita.es to independently assess these economic incentives and how they actually influence disinformation on platforms.

Recommendations from Fundación Maldita.es

Although disinformation is not a new phenomenon, major digital platforms currently play a key role in amplifying, encouraging, and funding it, increasing the scale of the problem.

Platforms must meet their obligation not to incentivize the spread of disinformation, not only through clear rules that define which content they pay for and which they do not, but also by ensuring they have the capacity to enforce these rules effectively.

Many of these platforms have rules requiring the disclosure of paid partnerships so that users know when content is sponsored. The same principle should apply to payments made by the platforms themselves, and this information should be available to users within the platforms' interfaces. In addition, accredited researchers should be given access to this data so that they can assess potential risks.

Meanwhile, the relevant authorities must ensure compliance with the Digital Services Act by carrying out the investigations needed to verify possible breaches by major platforms and by making sure those breaches are corrected. In particular, they should ensure:

- The existence of adequate measures to reduce the risk that algorithmic recommendation systems and monetization programs are abused to produce and distribute disinformation.
- The availability of the data held by platforms regarding their monetization programs, so that access requests from researchers seeking to conduct systemic risk studies are not arbitrarily denied.
- The effective enforcement of the platforms' internal rules in this area, as these rules constitute a commitment to their users.